At the excellent Agile on the Beach conference in Cornwall I did a presentation outlining some of the history, design and implementation of cyber-dojo.

The video has just gone live on youtube.

Travis build pipeline

I've been working on the Travis build pipeline for cyber-dojo.

The biggest gotcha I hit was that a failure in an after_success: section of a .travis.yml file does not fail the build. This was an issue because after successfully building and testing a docker image I wanted to do two things (and know if they failed):

- push the docker image to dockerhub

- trigger git repos dependent on the docker image so they in turn run their .travis.yml files.

...

language: node_js

script:

- ...

- curl -O https://raw.githubusercontent.com/cyber-dojo/ruby/master/push_and_trigger.sh

- chmod +x push_and_trigger.sh

- ./push_and_trigger.sh [DEPENDENT-REPO...]

push_and_trigger.sh looks like this:

#!/bin/bash

set -e

...

if [ "${TRAVIS_PULL_REQUEST}" == "false" ]; then

BRANCH=${TRAVIS_BRANCH}

else

BRANCH=${TRAVIS_PULL_REQUEST_BRANCH}

fi

if [ "${BRANCH}" == "master" ]; then

docker login --username "${DOCKER_USERNAME}" --password "${DOCKER_PASSWORD}"

TAG_NAME=$(basename ${TRAVIS_REPO_SLUG})

docker push cyberdojo/${TAG_NAME}

echo "PUSHED cyberdojo/${TAG_NAME} to dockerhub"

npm install travis-ci

script=trigger-build.js

curl -O https://raw.githubusercontent.com/cyber-dojo/ruby/master/${script}

node ${script} ${*}

fi

trigger-build.js looks like this:

...

var Travis = require('travis-ci');

var travis = new Travis({

version: '2.0.0',

headers: { 'User-Agent': 'Travis/1.0' }

});

var exit = function(call,error,response) {

console.error('ERROR:travis.' + call + 'function(error,response) { ...');

console.error(' error:' + error);

console.error('response:' + JSON.stringify(response, null, '\t'));

process.exit(1);

};

travis.authenticate({ github_token: process.env.GITHUB_TOKEN }, function(error,response) {

var repos = process.argv.slice(2);

if (error) { exit('authenticate({...}, ', error, response); }

repos.forEach(function(repo) {

var parts = repo.split('/');

var name = parts[0];

var tag = parts[1];

travis.repos(name, tag).builds.get(function(error,response) {

if (error) { exit('repos(' + name + ',' + tag + ').builds.get(', error, response); }

travis.requests.post({ build_id: response.builds[0].id }, function(error,response) {

if (error) { exit('requests.post({...}, ', error, response); }

console.log(repo + ':' + response.flash[0].notice);

});

});

});

});

Note the [set -e] in push_and_trigger.sh and the [process.exit(1)] in trigger-build.js

Hope this proves useful!

Python + behave

A big thank you to Millard Ellingsworth @millard3 who has added Python+behave to cyber-dojo.

Woohoo :-)

cyber-dojo Raspberry Pies in action

Liam Friel, who helps to run CoderDojoBray (in Ireland), asked me for some Raspberry Pies, which I was more than happy to give him, paid for from the donations lots of you generous people have made from cyber-dojo.

Liam sent me this wonderful photo of a CoderDojoBray session and writes:

Your Raspberry Pies have been getting a lot of use... We've got 8 Pies in total. Got a reasonably steady turnout at the dojo, 75-85 kids turning up each week.

Awesome. If, like Liam, you would like some Raspberry Pies to help kids learn about coding, please email. Thanks

running your own cyber-dojo Docker server on Windows

These instructions are intended for individuals wishing to run cyber-dojo locally on their Windows laptop.

Note that these instructions are for running Docker-Toolbox for Windows.

Running Docker Desktop for Windows may or may not work.

install Docker-Toolbox for Windows

From here.

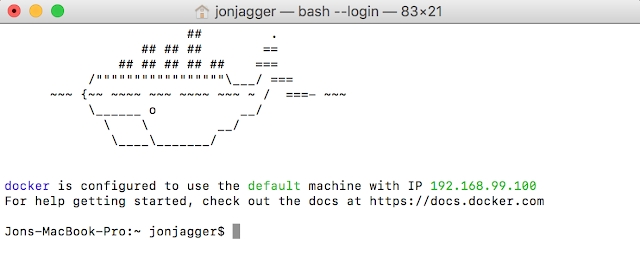

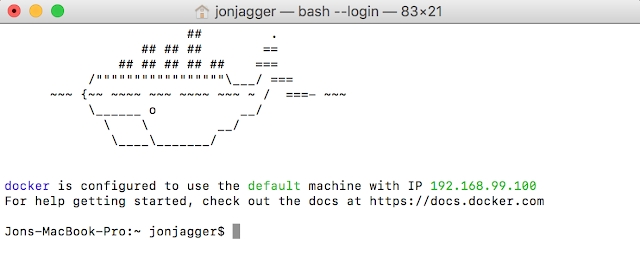

open a Docker-Quickstart-Terminal

get your cyber-dojo server's IP address

In the Docker-Quickstart-Terminal, type:

$ docker-machine ip default

It will print something like 192.168.99.100

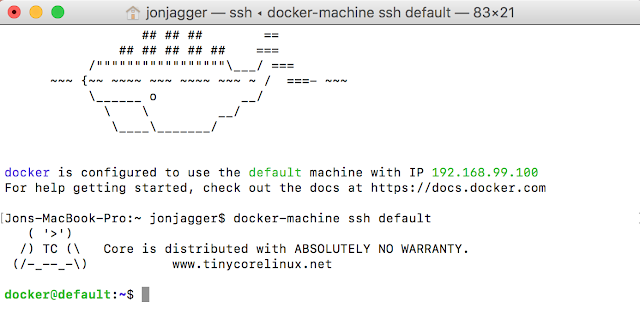

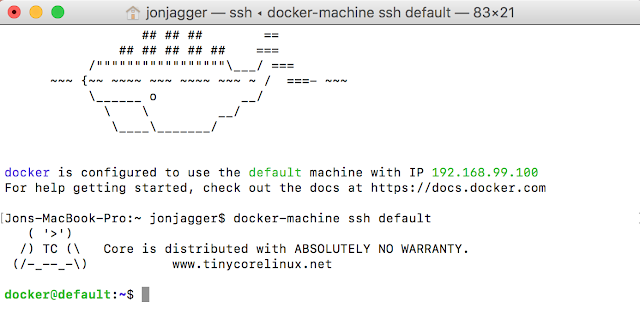

ssh into your cyber-dojo server

In the Docker-Quickstart-Terminal, type:

$ docker-machine ssh default

setup directory permissions

cyber-dojo saves the practice sessions to /cyber-dojo

You need to set the correct permissions for this directory.

The user-id for the saver service is 19663, and its group-id is 65533.

In the cyber-dojo server terminal, type:

docker@default:~$ sudo mkdir /cyber-dojo

docker@default:~$ sudo chown 19663:65533 /cyber-dojo

install the cyber-dojo script

In the cyber-dojo server terminal, type:

docker@default:~$ curl -O https://raw.githubusercontent.com/cyber-dojo/commander/master/cyber-dojo

docker@default:~$ chmod 700 cyber-dojo

Now use this cyber-dojo script, from a (default VM) cyber-dojo server terminal, to run your own cyber-dojo server.

running your own cyber-dojo Docker server on a Mac

These instructions are intended for individuals wishing to run cyber-dojo locally on their Mac laptop.

Note that these instructions are for running Docker-Toolbox for Mac.

Running Docker Desktop for Mac may or may not work.

install Docker-Toolbox for Mac

From here.

open a Docker-Quickstart-Terminal

get your cyber-dojo server's IP address

In the Docker-Quickstart-Terminal, type:

$ docker-machine ip default

It will print something like 192.168.99.100

ssh into your cyber-dojo server

In the Docker-Quickstart-Terminal, type:

$ docker-machine ssh default

setup directory permissions

cyber-dojo saves the practice sessions to /cyber-dojo

You need to set the correct permissions for this directory.

The user-id for the saver service is 19663, and its group-id is 65533.

In the cyber-dojo server terminal, type:

docker@default:~$ sudo mkdir /cyber-dojo

docker@default:~$ sudo chown 19663:65533 /cyber-dojo

install the cyber-dojo script

In the cyber-dojo server terminal, type:

docker@default:~$ curl -O https://raw.githubusercontent.com/cyber-dojo/commander/master/cyber-dojo

docker@default:~$ chmod 700 cyber-dojo

Now use this cyber-dojo script, from a (default VM) cyber-dojo server terminal, to run your own cyber-dojo server.

running your own cyber-dojo Docker server on Linux

install docker

If docker is not already installed, install it.

- To install manually, follow the instructions on the docker website.

- To install with a script (via curl):

$ curl -sSL https://get.docker.com/ | sh

- To install with a script (via wget):

$ wget -qO- https://get.docker.com/ | sh

add your user to the docker group

Eg, something like

$ sudo usermod -aG docker $USER

log out and log in again

You need to do this for the previous usermod to take effect.

setup directory permissions

cyber-dojo saves the practice sessions to /cyber-dojo

You need to set the correct permissions for this directory.

The user-id for the saver service is 19663, and its group-id is 65533.

In a terminal, type:

$ sudo mkdir /cyber-dojo

$ sudo chown 19663:65533 /cyber-dojo

install the cyber-dojo shell script

In a terminal, type:

$ curl -O https://raw.githubusercontent.com/cyber-dojo/commander/master/cyber-dojo

$ chmod 700 cyber-dojo

Now use this cyber-dojo script to run your own cyber-dojo server.

tar-piping a dir in/out of a docker container

The bottom of the docker cp web page has some examples of tar-piping into and out of a container.

I couldn't get them to work.

I guess there are different varieties of tar.

The following is what worked for me on Alpine Linux. It assumes

- ${container} is the name of the container

- ${src_dir} is the source dir

- ${dst_dir} is the destination dir

copying a dir out of a container

docker exec ${container} tar -cf - -C $(dirname ${src_dir}) $(basename ${src_dir}) \
  | tar -xf - -C ${dst_dir}

copying a dir into a container

tar -cf - -C $(dirname ${src_dir}) $(basename ${src_dir}) \
  | docker exec -i ${container} tar -xf - -C ${dst_dir}

setting files uid/gid

A tar-pipe can also set the destination files' owner/uid and group/gid:

tar --owner=UID --group=GID -cf - -C $(dirname ${src_dir}) $(basename ${src_dir}) \
  | docker exec -i ${container} tar -xf - -C ${dst_dir}

This is useful because unlike [docker run] the [docker cp] command does not have a [--user] option.

Alpine tar update

The default tar on Alpine Linux does not support the --owner/--group options. You'll need to:

apk --update add tar

Hope this proves useful!

the design and evolution of cyber-dojo

I've talked about the design and evolution of cyber-dojo at two conferences this year.

First at NorDevCon in Norwich and then also at Agile on the Beach in Falmouth. Here are the slides.

cyber-dojo web server default start-points

This page holds the choices where you select both your language+testFramework (eg Java,Cucumber)

and your exercise (eg Print Diamond).

This page holds the customized choices (eg Tennis refactoring, Python unittest).

The language+testFramework list is served (by default) from a start-point image called languages.

The exercises list is served (by default) from a start-point image called exercises.

The custom list is served (by default) from a start-point image called custom.

A start-point image is created from a list of git-repo URLs, using the main cyber-dojo script, as follows:

$ cyber-dojo start-point create NAME --languages <URL>...

The three default start-point images were created using the main cyber-dojo script, as follows:

$ LSP=https://raw.githubusercontent.com/cyber-dojo/languages-start-points

$ cyber-dojo start-point create languages \

--languages $(curl ${LSP}/master/start-points/common)

$ cyber-dojo start-point create exercises \

--exercises https://github.com/cyber-dojo/exercises-start-points

$ cyber-dojo start-point create custom \

--custom https://github.com/cyber-dojo/custom-start-points

Note the curl simply expands to a list of git-repo URLs, one for each of the most common language+testFrameworks. Each git-repo URL must contain a manifest.json file.

To use a different default start-point simply bring down the server, delete the one you wish to replace, create a new one with that default name, and bring the server back up. For example, to create a new languages start-point specifying just the Ruby test-frameworks:

$ cyber-dojo down

$ cyber-dojo start-point rm languages

$ cyber-dojo start-point create languages \

--languages \

https://github.com/cyber-dojo-languages/ruby-approval \

https://github.com/cyber-dojo-languages/ruby-cucumber \

https://github.com/cyber-dojo-languages/ruby-minitest \

https://github.com/cyber-dojo-languages/ruby-rspec \

https://github.com/cyber-dojo-languages/ruby-testunit

$ cyber-dojo up

cyber-dojo new release

The new release of cyber-dojo just went live :-)

- the setup start-points are now decoupled from the server and can be customized.

- the manifest.json format has changed accordingly.

- the instructions for running your own cyber-dojo server are new.

creating your own server start-points

cyber-dojo's architecture has customisable start-points.

preparing your start-point

- Simply create a manifest.json file for each individual start-point.

eg

$ mkdir -p douglas/one douglas/two
$ touch douglas/one/manifest.json douglas/two/manifest.json

- Edit each manifest.json file.

Here's an explanation of the manifest.json format.

Here's an example.

- Create a git repository in the top level directory.

eg

$ cd douglas
$ git init
$ git add .
$ git commit -m "first commit"

creating your start-point

Use the cyber-dojo script to create a new start-point naming the URL of the git repo (in this case ${PWD}). For example

$ cd douglas
$ cyber-dojo start-point create adams/my_custom --custom ${PWD}

which attempts to create a custom start-point image called adams/my_custom from all the files in the douglas directory.

If the creation fails the cyber-dojo script will print diagnostics.

starting your server with your start-point

eg with a custom start-point called adams/my_custom

$ cyber-dojo up --custom=adams/my_custom

eg with a languages start-point named adams/my_languages

$ cyber-dojo up --languages=adams/my_languages

eg with an exercises start-point named adams/my_exercises

$ cyber-dojo up --exercises=adams/my_exercises

eg with a combination

$ cyber-dojo up --languages=adams/my_languages --exercises=adams/my_exercises

adding a new language + test-framework to cyber-dojo

THE INFORMATION BELOW IS OUT OF DATE.

It will be updated properly soon.

Meanwhile, follow step 0 below, and then look at these examples from the cyber-dojo-languages github organization:

- repo for a docker-image for a language

- repo for a docker-image for a test-framework

0. Install docker

1. Create a docker-image for just the language

Make this docker-image unit-test-framework agnostic.

If you are adding a new unit-test-framework to an existing language skip this step.

For example, suppose you were building Lisp

- Create a new folder for your language, eg

$ mkdir lisp

- In your language's folder, create a file called Dockerfile

$ cd lisp
$ touch Dockerfile

If you can, base your new image on Alpine-linux as this will help keep images small. To do this make the first line of Dockerfile as follows

FROM cyberdojofoundation/language-base

Here's one based on Alpine-linux (217 MB: C#) Dockerfile

Here's one not based on Alpine (Ubuntu 1.26 GB: Python) Dockerfile

- Use the Dockerfile to build a docker-image for your language. For example

$ docker build -t cyberdojofoundation/lisp .

which, if it completes, creates a new docker-image called cyberdojofoundation/lisp using the Dockerfile (and build context) in . (the current folder).

2. Create a docker-image for the language and test-framework

Repeat the same process, building FROM the docker-image you created in the previous step.

For example, suppose your Lisp unit-test framework is called lunit

- Create a new folder underneath your language folder

$ cd lisp
$ mkdir lunit

- In your new test folder, create a file called Dockerfile

$ cd lunit
$ touch Dockerfile

The first line of this file must name the language docker-image you built in the previous step. Add lines for all the commands needed to install your unit-test framework...

FROM cyberdojofoundation/lisp
RUN apt-get install -y lispy-lunit
RUN apt-get install -y ...

- Create a file called red_amber_green.rb

$ touch red_amber_green.rb

- In red_amber_green.rb write a Ruby lambda accepting three arguments. For example, here is the C#-NUnit red_amber_green.rb:

lambda { |stdout,stderr,status|
  output = stdout + stderr
  return :red if /^Errors and Failures:/.match(output)
  return :green if /^Tests run: (\d+), Errors: 0, Failures: 0/.match(output)
  return :amber
}

cyber-dojo uses this to determine the test's traffic-light colour by passing it the stdout, stderr, and status outcomes of the test run.

- The Dockerfile for your language+testFramework must COPY red_amber_green.rb into the /usr/local/bin folder of your image. For example:

FROM cyberdojofoundation/lisp
RUN apt-get install -y lispy-lunit
RUN apt-get install -y ...
COPY red_amber_green.rb /usr/local/bin

I usually start with a red_amber_green.rb that simply returns :red. Then, once I have a start-point using the language+testFramework docker-image, I use cyber-dojo to gather outputs which I use to build up a working red_amber_green.rb

- Use the Dockerfile to try and build your language+testFramework docker-image.

The name of an image takes the form hub-name/image-name. Do not include a version number in the image-name. For example

$ docker build -t cyberdojofoundation/lisp_lunit .

which, if it completes, creates a new docker image called cyberdojofoundation/lisp_lunit using the Dockerfile in . (the current folder).

3. Use the language+testFramework docker-image in a new start-point

Use the new image name (eg cyberdojofoundation/lisp_lunit) in a new manifest.json file in a new start-point.

cyber-dojo start-points manifest.json entries explained

Example: the manifest.json file for Java/JUnit looks like this:

{

"image_name": "ghcr.io/cyber-dojo-languages/java_junit:7aa5992",

"display_name": "Java 25.0.2, JUnit 6.0.3",

"visible_filenames": [

"Hiker.java",

"HikerTest.java",

"cyber-dojo.sh"

],

"rag_lambda": "red_amber_green.rb",

"filename_extension": [ ".java" ],

"max_seconds": 10,

"tab_size": 4,

"progress_regexs" : [

"Tests run\\: (\\d)+,(\\s)+Failures\\: (\\d)+",

"OK \\((\\d)+ test(s)?\\)"

]

}

Required entries

"image_name": string

The name of the docker image used to run a container in which cyber-dojo.sh is executed.

Do not include any version numbers (eg of the compiler or test-framework).

All packages/tools/libraries/files/etc used in cyber-dojo.sh (for example the java compiler)

must be installed in this image. When cyber-dojo.sh runs inside this image

it has no access to the internet.

"display_name": string

The name as it appears in the start-point setup pages. For example, "Java 25.0.2, JUnit 6.0.3" means that "Java 25.0.2, JUnit 6.0.3" will appear as a selectable entry when choosing your language + test-framework when setting up your practice session. A single string typically with the language name first, then a comma, then the test-framework name.

"visible_filenames": [ string, string, ... ]

Filenames that will be visible in the browser's editor when an animal initially enters a cyber-dojo. Each of these files must be a plain text file and exist in the manifest.json's directory. Filenames can be in nested sub-directories, eg "tests/HikerTest.java".

Must include "cyber-dojo.sh". This is because cyber-dojo.sh is the name of the shell file assumed by the runner to be the start point for running the tests. You can write any actions inside cyber-dojo.sh but clearly any programs it tries to run must be installed in the docker image_name. For example, if cyber-dojo.sh runs gcc to compile C files then gcc has to be installed. If cyber-dojo.sh runs javac to compile java files then javac has to be installed.

"rag_lambda": string

The filename containing a Ruby lambda returning a test's traffic-light colour (red, amber, or green) from the stdout, stderr, status of cyber-dojo.sh.

For example, here's the one for Java-JUnit.

lambda { |stdout,stderr,status|

output = stdout + stderr

containers_pattern = Regexp.new('^\[\s+(\d+) containers failed\s+\]')

if match = containers_pattern.match(output)

if match[1] != '0'

return :amber

end

end

tests_pattern = Regexp.new('^\[\s+(\d+) tests failed\s+\]')

if match = tests_pattern.match(output)

if match[1] == '0'

return :green

else

return :red

end

else

return :amber

end

}

The lambda can return red, amber, or green as a :symbol or a string. It should return:

- green if the tests all passed

- red if a test assertion fails

- amber if there is a "non-assertion" error, eg a syntax error. This is usually straightforward for statically typed languages such as C. For dynamically typed languages such as Python it can be less straightforward - do what seems sensible.
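As a rough illustration of how such a lambda behaves, the sketch below restates the Java-JUnit lambda above in a more compact form and calls it with three made-up outputs. The constant names (RAG, PASSING, FAILING, BROKEN) and the sample output strings are assumptions invented for this example; they are not real JUnit output and not part of cyber-dojo.

```ruby
# A compact restatement of the Java-JUnit lambda (same two patterns).
RAG = lambda { |stdout, stderr, _status|
  output = stdout + stderr
  containers = /^\[\s+(\d+) containers failed\s+\]/.match(output)
  return :amber if containers && containers[1] != '0'
  tests = /^\[\s+(\d+) tests failed\s+\]/.match(output)
  return :amber unless tests
  tests[1] == '0' ? :green : :red
}

# Hypothetical outputs, invented for illustration only.
PASSING = "[         0 containers failed      ]\n[         0 tests failed          ]\n"
FAILING = "[         0 containers failed      ]\n[         2 tests failed          ]\n"
BROKEN  = "Hiker.java:5: error: ';' expected\n"

puts RAG.call(PASSING, '', 0)  # every test passed
puts RAG.call(FAILING, '', 1)  # a test assertion failed
puts RAG.call('', BROKEN, 1)   # a "non-assertion" error
```

Gathering a few real outputs like this and running them through the lambda is one way to build up a working red_amber_green.rb incrementally.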

"filename_extension": [ string, string, ... ]

The extensions of source files. The first entry is also used when creating a new filename. Must be non-empty.

"tab_size": int

The number of spaces a tab character expands to in the editor. Must be an integer between 1 and 12.

Optional entries

"progress_regexs": [ string, string ]

Used on the dashboard to show the test output line (which often contains the number of passing and failing tests) of each animal's most recent red/green traffic light. Useful when your practice session starts from a large number of pre-written tests and you wish to monitor the progress of each animal.

An array of two strings used to create Ruby regexs. The first one to match a red traffic light's test output, and the second one to match a green traffic light's test output.

An example for Python, unittest.

Defaults to [ ].
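To make the double escaping concrete, here is a small sketch that parses the two "progress_regexs" strings from the Java/JUnit manifest above and applies them to test output. How cyber-dojo itself applies the regexs is not shown in this post, so the progress_line helper, the constant names, and both sample outputs are assumptions made for illustration.

```ruby
require 'json'

# The "progress_regexs" entry from the Java/JUnit manifest, as raw JSON text.
MANIFEST_FRAGMENT = <<~'EOS'
  { "progress_regexs": [
      "Tests run\\: (\\d)+,(\\s)+Failures\\: (\\d)+",
      "OK \\((\\d)+ test(s)?\\)"
  ] }
EOS

# JSON parsing turns each \\ into a single backslash before Regexp.new sees it.
RED_REGEX, GREEN_REGEX =
  JSON.parse(MANIFEST_FRAGMENT)['progress_regexs'].map { |s| Regexp.new(s) }

# Hypothetical helper: the first output line matched by regex, or nil.
def progress_line(output, regex)
  output.lines.map(&:chomp).find { |line| regex.match?(line) }
end

# Hypothetical test outputs, invented for illustration only.
RED_OUTPUT   = "JUnit run\nTests run: 3,  Failures: 1\n"
GREEN_OUTPUT = "JUnit run\nOK (3 tests)\n"

puts progress_line(RED_OUTPUT, RED_REGEX)
puts progress_line(GREEN_OUTPUT, GREEN_REGEX)
```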

"highlight_filenames": [ string, string, ... ]

Filenames whose appearance is highlighted in the browser. This can be useful if you have many "visible_filenames" and want to mark which files form the focus of the practice.

An array of strings. A strict subset of "visible_filenames".

Defaults to [ ].

"hidden_filenames": [ string, string, ... ]

cyber-dojo.sh may create extra files (eg profiling stats). All text-files created under /sandbox are returned to the browser unless their name matches any of these string regexs.

An array of strings used to create Ruby regexs, used by cyber-dojo, like this Regexp.new(string).

For example, to hide files ending in .d you can use the following string ".*\\.d"

Defaults to [ ].

DEPRECATED. Instead, inside cyber-dojo.sh, use a trap handler to delete unwanted files. For example here's a trap handler for Ruby, Approval.
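For readers maintaining an older manifest, the filtering described above can be sketched as follows. This is only an illustration of the Regexp.new(string) idea: the returned_filenames helper and the filenames are hypothetical, and whether cyber-dojo anchors the match is not stated here, so the sketch uses a plain unanchored match.

```ruby
# The JSON string ".*\\.d" becomes this Ruby string once parsed.
HIDDEN_FILENAMES = ['.*\.d'].freeze

# Hypothetical helper: drops every filename matched by any hidden regex.
def returned_filenames(filenames, hidden_strings)
  regexs = hidden_strings.map { |string| Regexp.new(string) }
  filenames.reject { |name| regexs.any? { |regex| regex.match?(name) } }
end

# Made-up filenames, for illustration only.
p returned_filenames(%w[hiker.c hiker.d makefile], HIDDEN_FILENAMES)
```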

"max_seconds": int

The maximum number of seconds cyber-dojo.sh has to complete.

An integer between 1 and 20.

Defaults to 10.

DEPRECATED.
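The rules listed above (required entries, the cyber-dojo.sh requirement, and the integer ranges) can be collected into a quick self-check before creating a start-point. The sketch below is not the checker the cyber-dojo script uses; manifest_problems, in_range? and the message strings are hypothetical names chosen for this example, and the manifest hash simply repeats the Java/JUnit values shown earlier.

```ruby
REQUIRED_KEYS = %w[image_name display_name visible_filenames rag_lambda
                   filename_extension tab_size].freeze

# True when value is an Integer inside range.
def in_range?(value, range)
  value.is_a?(Integer) && range.cover?(value)
end

# Hypothetical checker: returns a list of problems, empty when none are found.
def manifest_problems(manifest)
  problems = (REQUIRED_KEYS - manifest.keys).map { |key| "missing #{key}" }
  visible = Array(manifest['visible_filenames'])
  problems << 'visible_filenames must include cyber-dojo.sh' unless visible.include?('cyber-dojo.sh')
  problems << 'filename_extension must be non-empty' if Array(manifest['filename_extension']).empty?
  problems << 'tab_size must be an integer between 1 and 12' unless in_range?(manifest['tab_size'], 1..12)
  unless in_range?(manifest.fetch('max_seconds', 10), 1..20)
    problems << 'max_seconds must be an integer between 1 and 20'
  end
  highlighted = Array(manifest['highlight_filenames'])
  strict_subset = (highlighted - visible).empty? && highlighted.size < visible.size
  unless highlighted.empty? || strict_subset
    problems << 'highlight_filenames must be a strict subset of visible_filenames'
  end
  problems
end

# The Java/JUnit manifest from above (optional progress_regexs left out).
JAVA_JUNIT = {
  'image_name' => 'ghcr.io/cyber-dojo-languages/java_junit:7aa5992',
  'display_name' => 'Java 25.0.2, JUnit 6.0.3',
  'visible_filenames' => %w[Hiker.java HikerTest.java cyber-dojo.sh],
  'rag_lambda' => 'red_amber_green.rb',
  'filename_extension' => ['.java'],
  'max_seconds' => 10,
  'tab_size' => 4
}.freeze

p manifest_problems(JAVA_JUNIT)
p manifest_problems(JAVA_JUNIT.merge('tab_size' => 40, 'visible_filenames' => ['Hiker.java']))
```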

cyber-dojo traffic-lights!

My friend Byran who works at the awesome Bluefruit Software in Redruth has hooked up his cyber-dojo web server to an actual traffic-light! Fantastic. Check out the video below :-)

Byran writes

It started out as a joke between myself and Josh (one of the testers at Bluefruit). I had the traffic lights in my office as I was preparing a stand to promote the outreach events (Summer Huddle, Mission to Mars, etc...) Software Cornwall runs. The conversation went on to alternative uses for the traffic lights, I was planning to see if people would pay attention to the traffic lights if I put them in a corridor at the event; we then came up with the idea that we could use them to indicate TDD test status.

Although it started out as a joke I am going to use it at the Summer Huddle, the lights change every time anyone runs a test so it should give an idea of how the entire group are doing without highlighting an individual pair.

The software setup is very simple, there is a Python web server (using the Flask library) running on a Raspberry Pi that controls the traffic lights using GPIO Zero. When the appendTestTrafficLight() function (in run_tests.js.erb) appends the traffic light image to the webpage I made it send an http 'get' request to the Raspberry Pi web server to set the physical traffic lights at the same time. At the moment the IP address of the Raspberry Pi is hard coded in the 'run_tests.js.erb' file so I have to rebuild the web image if anything changes but it was only meant to be a joke/proof of concept. The code is on a branch called traffic_lights on my fork of the cyber-dojo web repository.

The hardware is also relatively simple, there is a converter board on the Pi; this only converts the IO pin output connector of the Raspberry Pi to the cable that attaches to the traffic lights.

The other end of the cable from the converter board attaches to the board in the top left of the inside of the traffic lights; this has some optoisolators that drive the relays in the top right which in turn switch on and off the transformers (the red thing in the bottom left) that drive the lights.

I have to give credit to Steve Amor for building the hardware for the traffic lights. They are usually used during events we run to teach coding to children (and sometimes adults). The converter board has LEDs, switches and buzzers on it to show that there isn't a difference between writing software to toggle LEDs vs driving actual real world systems, it's just what's attached to the pin. Having something where they can run the same code to drive LEDs and drive real traffic lights helps to emphasise this point.

a new architecture is coming

Between my recent fishing trips I have been working hard on a new cyber-dojo architecture.

- pluggable start-points so you can now use your own language/tests/exercises lists on the setup page

- a new setup page for custom start-points

- once I've got the output parse functions inside the start-point volume I'll be switching the public cyber-dojo server to this image and updating the running-your-own-server instructions.

- I've switched all development to a new github repo which has instructions if you want to try it now.

the story of cyber-dojo (so far)

The video of my ACCU 2016 talk, the story of cyber-dojo (so far), is now up on the ACCU youtube channel. Enjoy!

nordevcon cyber-dojo presentation

It was a pleasure to speak at the recent norfolk developers conference.

My talk was "cyber-dojo: executing your code for fun and not for profit".

I spoke about cyber-dojo, demo'd its features, discussed its history, design, difficulties and underlying technology.

Videos of the talk are now on the infoq website. The slide-sync is not right at the start of part 2 but it soon gets corrected.

running your own cyber-dojo server

This page relates to an old version of cyber-dojo.

This is the page you're looking for

Docker tar pipe

This blog post is now out of date. The docker tar-pipe is now executed from micro-services implemented in Ruby.

For example, see runner.

I've been working on re-architecting cyber-dojo so the web-server (written in Rails) runs in a Docker image...

- the server receives its source files from the browser

- it saves them to a temporary folder

- it back-ticks a shell file which

- ...puts the source files into a docker container

- ...runs the source files (by executing cyber-dojo.sh) as user=nobody

- ...limits execution to 10 seconds

My shell file started like this:

#!/bin/sh

SRC_DIR=$1 # where source files are

IMAGE=$2 # the image to run them in

MAX_SECS=$3 # how long they've got to complete

TAR_FILE=`mktemp`.tgz # source files are tarred into this

SANDBOX=/sandbox # where tar is untarred to inside container

# - - - - - - - - - - - - - - - - - - -

# 1. Create the tar file

cd ${SRC_DIR}

tar -zcf ${TAR_FILE} .

# - - - - - - - - - - - - - - - - - - -

# 2. Start the container

CID=$(sudo docker run --detach \

--interactive \

--net=none \

--user=nobody \

${IMAGE} sh)

# - - - - - - - - - - - - - - - - - - -

# 3. Pipe the source files into the container

cat ${TAR_FILE} \

| sudo docker exec --interactive \

--user=root \

${CID} \

sh -c "mkdir ${SANDBOX} \

&& tar zxf - -C ${SANDBOX} \

&& chown -R nobody ${SANDBOX}"

# - - - - - - - - - - - - - - - - - - -

# 4. After max_seconds, remove the container

(sleep ${MAX_SECS} && sudo docker rm --force ${CID}) &

# - - - - - - - - - - - - - - - - - - -

# 5. Run cyber-dojo.sh in the container

sudo docker exec --user=nobody \

${CID} \

sh -c "cd ${SANDBOX} && ./cyber-dojo.sh 2>&1"

# - - - - - - - - - - - - - - - - - - -

# 6. If the container isn't running, the sleep woke and removed it

RUNNING=$(sudo docker inspect --format="{{ .State.Running }}" ${CID})

if [ "${RUNNING}" != "true" ]; then

exit 137 # (128=timed-out) + (9=killed)

else

exit 0

fi

Things to note:

- The container is started in detached mode. This is so I can get its CID and setup the backgrounded sleep task (4) before running cyber-dojo.sh (5)

- I use [sudo docker] because I do not put the current user into the docker group. Instead I sudo the current user to run the docker binary without a password.

- The first [docker exec] user is root but this is root inside the CID container not root where the shell file is being run.

- I can pipe STDIN from the shell into the container

- The sleep task (4) kills the container if it runs out of time and step (6) detects this.

I realized I could avoid creating the (physical) tar file completely by using a 'proper' tar pipe:

#!/bin/sh

...

(cd ${SRC_DIR} && tar -zcf - .) \

| sudo docker exec --interactive \

--user=root \

$CID \

sh -c "mkdir ${SANDBOX} \

&& tar -zxf - -C ${SANDBOX} \

&& chown -R nobody ${SANDBOX}"

...

- [tar -zcf] means create a compressed tar file

- [-] means don't write to a named file but to STDOUT

- [.] means tar the current directory

- which is why there's a preceding cd

- [tar -zxf] means extract files from the compressed tar file

- [-] means don't read from a named file but from STDIN

- [-C ${SANDBOX}] means save the extracted files to the ${SANDBOX} directory
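The same flags can be tried without docker by piping between two local directories. The Ruby sketch below is a minimal illustration under that assumption, not the code of the runner micro-service; the tar_pipe method name is hypothetical, and it uses -C on the creating side in place of the preceding cd.

```ruby
require 'open3'
require 'tmpdir'

# Streams the contents of src_dir into dst_dir through a tar pipe.
# No tar file is written to disk. Returns true when both tars succeed.
def tar_pipe(src_dir, dst_dir)
  create  = ['tar', '-zcf', '-', '-C', src_dir, '.'] # compressed archive to STDOUT
  extract = ['tar', '-zxf', '-', '-C', dst_dir]      # compressed archive from STDIN
  Open3.pipeline(create, extract).all?(&:success?)
end

# Usage with two throwaway local directories.
Dir.mktmpdir do |src|
  Dir.mktmpdir do |dst|
    File.write(File.join(src, 'cyber-dojo.sh'), "echo hello\n")
    puts tar_pipe(src, dst)
    puts File.read(File.join(dst, 'cyber-dojo.sh'))
  end
end
```

In the shell version the extracting side runs inside the container via docker exec; here both ends are plain local tar processes.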

Then I realized I could combine the two [docker exec]s into one and drop the chown...

...

# - - - - - - - - - - - - - - - - - - -

# 1. Start the container

CID=$(sudo docker run \

--detach \

--interactive \

--net=none \

--user=nobody \

${IMAGE} sh)

# - - - - - - - - - - - - - - - - - - -

# 2. After max_seconds, remove the container

(sleep ${MAX_SECS} && sudo docker rm --force ${CID}) &

# - - - - - - - - - - - - - - - - - - -

# 3. Tar pipe the source files into the container and run

(cd ${SRC_DIR} && tar -zcf - .) \

| sudo docker exec --interactive \

--user=nobody \

${CID} \

sh -c "mkdir ${SANDBOX} \

&& cd ${SANDBOX} \

&& tar -zxf - -C . \

&& ./cyber-dojo.sh"

# - - - - - - - - - - - - - - - - - - -

# 4. If the container isn't running, the sleep woke and removed it

RUNNING=$(sudo docker inspect --format="{{ .State.Running }}" ${CID})

if [ "${RUNNING}" != "true" ]; then

exit 137 # (128=timed-out) + (9=killed)

else

exit 0

fi

This worked but the backgrounded sleep created a zombie process.

That's for another blog.
